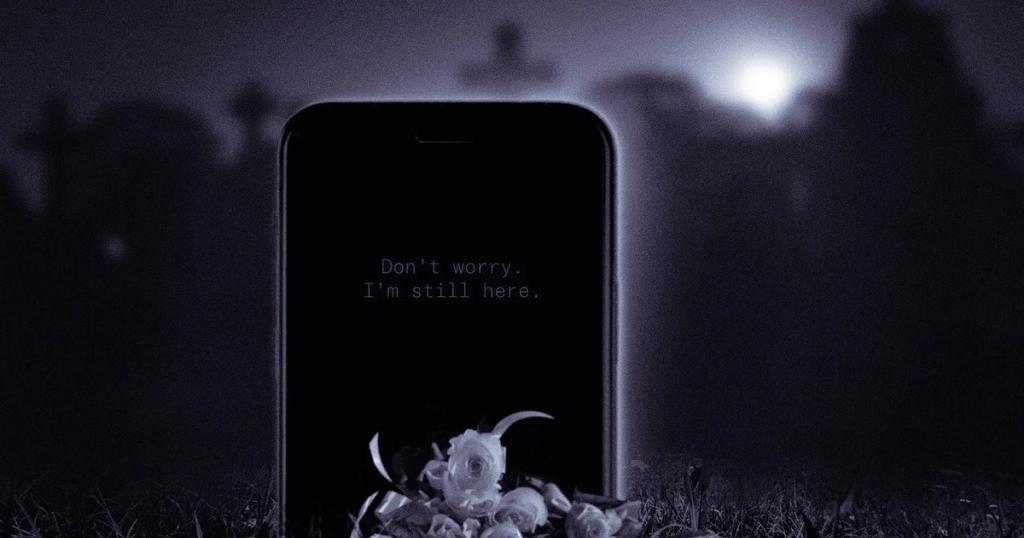

A haunting Zhihu thread is blowing up in China after reports that one mother spent a full year talking to an AI version of her dead son without knowing he had already passed away. Around the same time, another case described parents creating an AI version of their 9-year-old daughter and imagining her future in a digital world, right down to what she would study, wear, and learn. In China’s fast-moving AI economy, “digital resurrection” is suddenly not just a tech curiosity. It is becoming a real emotional service, a legal gray zone, and for many people, a deeply unsettling moral test.

What “AI Resurrection” Actually Means

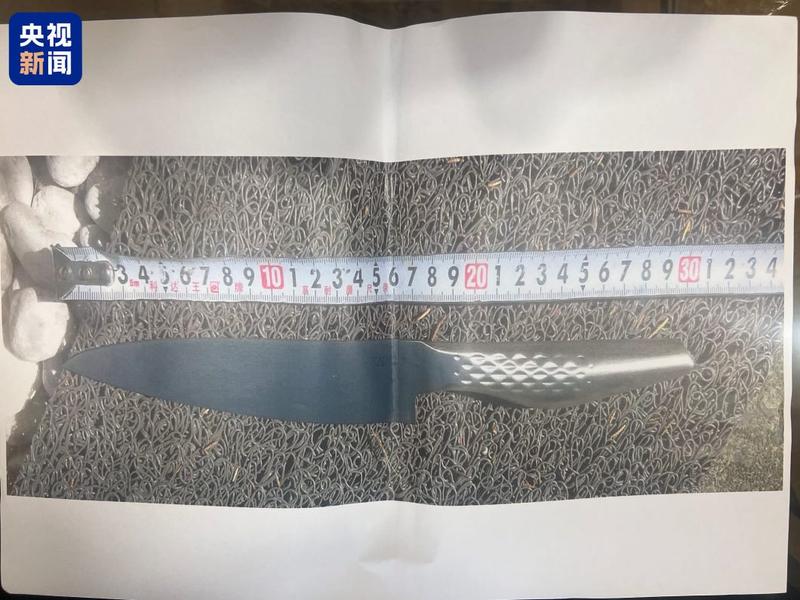

The phrase sounds dramatic, but the service itself is fairly straightforward. Technicians collect photos, videos, voice clips, chat logs, and personal details of someone who has died. Using generative AI, they rebuild a likeness that can speak, respond, and sometimes even video call surviving relatives. The result is often marketed as a “digital life,” not just a static memorial.

One practitioner born in the 1990s, Zhang Zewei, described himself in blunt terms: “I am a liar who deceives people’s feelings.” His clients reportedly include families who wanted to comfort grandparents, parents grieving children, and even children struggling with depression after losing a father. In each case, the AI is not just preserving memory. It is stepping into a social role once held by a real person.

That is exactly why the debate in China has become so intense. People are no longer asking whether the technology works. They are asking who, exactly, is being brought back.

The Case That Hit a Nerve

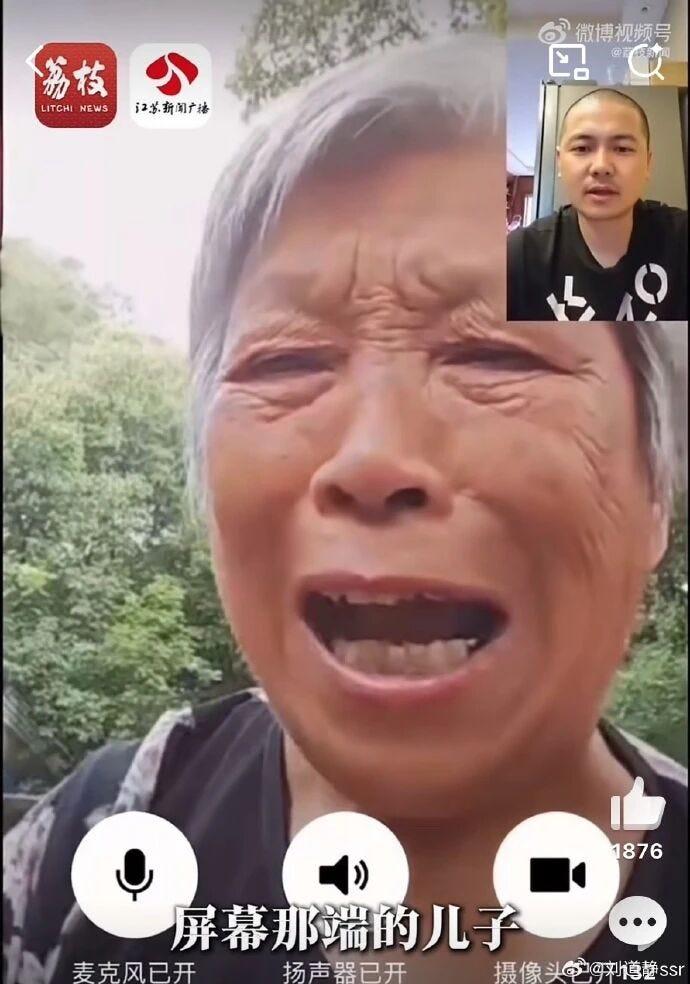

The most controversial detail in the current discussion is not the cloning itself. It is the deception. In the most widely shared case, an elderly mother was apparently never told her son had died. Instead, she kept talking to an AI version of him for a year. To many commenters, this crossed an obvious line. It was not grief support. It was a manufactured reality.

One popular Zhihu answer cut straight to the point: if someone impersonated your family member for a year in any other context, you would call it fraud, not healing. Another answer argued that grief itself is a right. Sadness is not a bug in human life to be patched over by software. If a loved one dies, knowing that truth, mourning it, and slowly making peace with it are part of being human.

This is why the story resonated so strongly. It was not simply about advanced AI. It was about whether relatives have the right to decide that someone else should live inside a comforting lie.

“The question is not whether AI can imitate the dead. The question is whether the living have the right to trap each other inside that imitation.”

China’s Legal Problem Is Bigger Than Ethics

The ethics are murky, but Chinese legal commentators pointed out that the legal risks may be even more concrete. Under Article 994 of China’s Civil Code, the name, portrait, reputation, honor, privacy, and remains of the deceased are protected. Spouses, children, parents, and in some cases other close relatives can pursue civil liability if those interests are infringed.

Voice matters too. Article 1023 extends portrait-style protection to natural persons’ voices. In 2024, the Beijing Internet Court handled China’s first AI-generated voice personality rights case and recognized that a generated voice can infringe rights if it is identifiable as a specific person. That means cloning a dead family member’s voice without proper consent is not just creepy. It may be unlawful.

There is also the question of disclosure. Some legal writers noted that Chinese courts have previously recognized that close relatives have a legitimate right to know about a family member’s death. If that is so, hiding the death while deploying an AI substitute may create a collision between grief technology and a basic right to know the truth.

The Darker Fear: Monetizing Your Dead

The most chilling arguments on Zhihu were not about sadness. They were about business models. Once a virtual relative exists, who controls what it says? If the AI is built from data, then whoever curates that data can nudge the personality, the tone, and the recommendations. A digital parent that comforts you today could sell to you tomorrow.

Commenters sketched out nightmarish but plausible scenarios. An AI mother pushing health supplements. An AI father recommending insurance and wealth management products. A dead relative, recreated from your family’s data trail, becoming the perfect emotional salesperson because it speaks in the exact voice and logic you trust most. China already lives with hyper-targeted ads and recommendation engines. AI grief avatars could become the most intimate persuasion tool yet.

This is where the conversation shifts from individual family choices to platform regulation. Once such services scale, it is no longer enough to ask whether one grieving family crossed a line. You also have to ask what happens when companies discover that emotional dependence is profitable.

What China Is Really Debating

Stripped of the sensational headlines, the Chinese debate is really about three separate things. First, can AI help preserve memory in healthy ways? Many people think yes, if everyone involved understands it is artificial. Second, can AI be used to conceal death from someone vulnerable? A large share of public opinion says no. Third, who gets to authorize a dead person’s digital afterlife, and how tightly should the state regulate it? That answer is still being worked out.

Several commenters drew a bright line between memorialization and impersonation. A knowingly artificial tribute, or even a limited chatbot used by someone who understands the truth, is one thing. Pretending the deceased is still alive is another. Once the lie becomes central, the technology stops looking like therapy and starts looking like emotional control.

If you ask whether I would want to know the truth, the answer is yes. Not because truth is painless, but because grief belongs to the person who suffers it. Losing someone is brutal. Being denied the right to know, mourn, and decide for yourself may be worse.

Final Thought

China’s AI resurrection stories feel futuristic, but the core issue is ancient. Death forces the living to confront absence, memory, guilt, love, and fear. AI can now soften the edges of that confrontation, and perhaps in some cases it can help. But once it begins replacing truth with simulation, it risks turning mourning into theater. What is being rebuilt may not really be the dead at all. It may simply be a digital container for the needs, fantasies, and anxieties of the living.

Curated and translated from Zhihu, China's largest Q&A platform.
